AWS Just Put the Largest Chip Ever Made Inside Bedrock. Cerebras Will Run the Decode. Trainium Will Run the Prefill.

On March 13, 2026, AWS and Cerebras Systems announced a cross-vendor disaggregated inference architecture inside Amazon Bedrock: the first hyperscale deployment to put two competing custom AI chips on the same model and route each phase of the workload to whichever chip handles it faster. AWS Trainium handles prefill (compute-bound prompt processing). The Cerebras CS-3, the system built around the wafer-scale WSE chip (the largest single piece of silicon ever manufactured), handles decode (memory-bandwidth-bound output generation). The two are stitched together inside AWS data centers via Elastic Fabric Adapter networking on the Nitro System. AWS’s claim: inference an “order of magnitude faster” than what Bedrock customers can get today. The service rolls out in the second half of 2026.
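The two-stage split can be pictured as a toy pipeline. This is only an illustrative sketch, not AWS's or Cerebras's implementation: the names `KVCache`, `PrefillWorker`, `DecodeWorker`, and `serve` are hypothetical, the "model" is a stand-in that emits a counter instead of real tokens, and the hand-off between stages (which the announcement says runs over Elastic Fabric Adapter) is reduced to passing a Python object.

```python
from dataclasses import dataclass, field
from typing import Iterator, List


@dataclass
class KVCache:
    """Stand-in for the attention key/value state built during prefill."""
    entries: List[int] = field(default_factory=list)


class PrefillWorker:
    """Hypothetical compute-optimized stage: handles the whole prompt at once."""

    def run(self, prompt_tokens: List[int]) -> KVCache:
        # A real system would run a parallel forward pass over every prompt
        # token; this toy version only records the tokens.
        return KVCache(entries=list(prompt_tokens))


class DecodeWorker:
    """Hypothetical bandwidth-optimized stage: emits one token per step."""

    def run(self, cache: KVCache, max_new_tokens: int) -> Iterator[int]:
        for _ in range(max_new_tokens):
            # Toy "model": the next token is the current cache length.
            next_token = len(cache.entries)
            cache.entries.append(next_token)  # every step extends the cache
            yield next_token


def serve(prompt_tokens: List[int], max_new_tokens: int) -> List[int]:
    """Route one request: prefill on one worker, decode on another."""
    cache = PrefillWorker().run(prompt_tokens)  # stage 1: prompt processing
    # In a real deployment the cache would cross the network at this point.
    return list(DecodeWorker().run(cache, max_new_tokens))  # stage 2: output


print(serve([10, 11, 12], max_new_tokens=4))  # [3, 4, 5, 6]
```

The point of the sketch is the shape of the hand-off: the prefill stage runs once per request and produces state, and the decode stage consumes that state in a serial loop.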

Two chips. Two different bottlenecks. One token stream.

Inference on a transformer model is two different workloads pretending to be one. First the model has to prefill: read the entire prompt, compute the key/value cache, and warm up the attention state. That phase is highly parallel and compute-bound, with lots of matrix multiplications and modest memory traffic per FLOP. Then the model has to decode: emit output tokens one at a time, each one re-reading the model weights and the entire key/value cache. That phase is serial and memory-bandwidth-bound, with modest compute per token and enormous memory traffic. On a single GPU, the same hardware serves both phases, so you pay for the worse of the two.
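A back-of-envelope way to see the gap is arithmetic intensity: FLOPs performed per byte of weights read from memory. The sketch below rests on simplifying assumptions that are not from the announcement: roughly 2 FLOPs per parameter per token, 16-bit weights, every weight read once per forward pass, and no accounting for key/value-cache or activation traffic. The function name `arithmetic_intensity` and the 2,048-token prompt are illustrative.

```python
def arithmetic_intensity(tokens_per_pass: int, bytes_per_param: float = 2.0) -> float:
    """Approximate FLOPs per byte of weight traffic for one forward pass.

    Assumes ~2 FLOPs per parameter per token and that each weight is read
    from memory once per pass. KV-cache and activation traffic are ignored,
    so this is a rough illustration, not a measurement.
    """
    if tokens_per_pass <= 0:
        raise ValueError("tokens_per_pass must be positive")
    flops_per_param = 2.0 * tokens_per_pass
    return flops_per_param / bytes_per_param


# Prefill: a hypothetical 2,048-token prompt processed in one parallel pass.
prefill = arithmetic_intensity(tokens_per_pass=2048)
# Decode: one new token per pass for a single, unbatched request.
decode = arithmetic_intensity(tokens_per_pass=1)

print(f"prefill: {prefill} FLOPs/byte")  # prefill: 2048.0 FLOPs/byte
print(f"decode:  {decode} FLOPs/byte")   # decode:  1.0 FLOPs/byte
```

Under these assumptions the parameter count cancels out: the same weights are read either way, but prefill amortizes each read across the whole prompt while unbatched decode amortizes it across a single token.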

“Each system does what it's best at. The result will be inference that's an order of magnitude faster and higher performance than today.”

David Brown · VP, Compute & Machine Learning Services, AWS · March 13, 2026

“Every enterprise around the world will be able to benefit from blisteringly fast inference within their AWS environment.”

Andrew Feldman · Founder & CEO, Cerebras Systems · March 13, 2026

The unstated context behind both quotes: AWS already has the world’s largest enterprise inference book of business via Bedrock, but its in-house silicon (Inferentia, Trainium) has been outclassed at the decode stage by both Nvidia’s GPUs and Cerebras’s wafer-scale engine. Anthropic (Amazon’s primary Trainium training partner) and OpenAI (which has committed to two gigawatts of Trainium capacity) already give AWS the prefill workload at hyperscale. Adding Cerebras for decode is how AWS closes the speed gap to the GPU economy without acknowledging it had one.

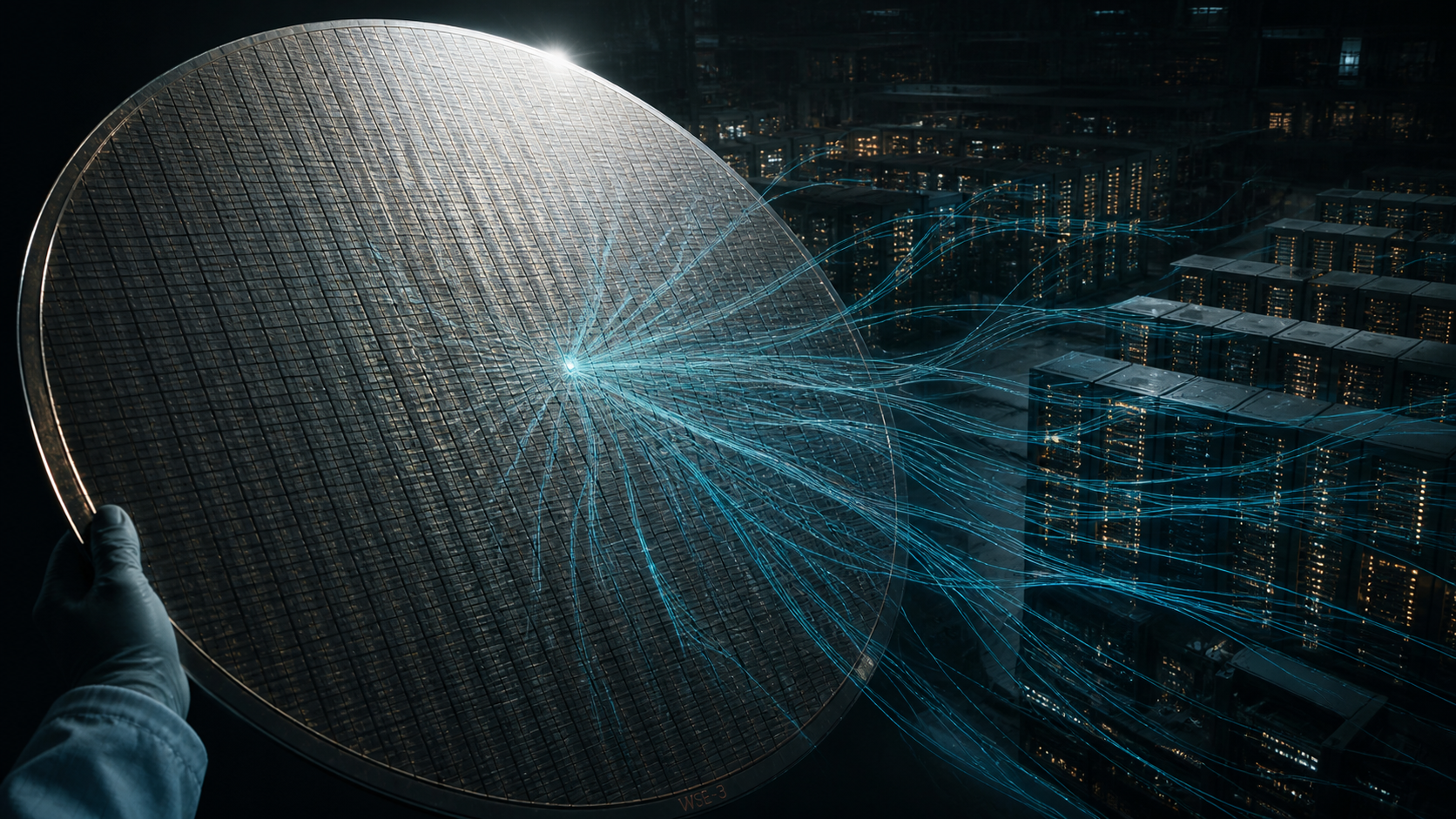

One chip, twelve inches across. All the model weights, on-chip.

The Cerebras Wafer-Scale Engine is the largest single chip ever manufactured. Where TSMC normally cuts ~80 separate die out of a 12-inch wafer, Cerebras keeps the wafer whole and treats it as one chip. The result is enough on-chip SRAM to store the weights of a frontier model without leaving the die. That eliminates the off-chip-memory round trip that gates GPU decode speed. Cerebras’s public benchmarks — Llama 3.1 405B at 969 output tokens/second, Llama 4 Scout at 2,600 tokens/second versus 137 on the leading GPU — are direct consequences of that architectural choice.
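To get a feel for why memory bandwidth gates decode, a rough ceiling can be written down as bandwidth divided by the bytes of weights read per token. The sketch below is a simplified model under loud assumptions: a dense model, 16-bit weights, a single unbatched stream, and every weight read once per output token. Real systems use batching, quantization, and speculative decoding, so none of these figures should be read as a specification for any product. The function names and the 1 TB/s bandwidth figure are hypothetical; the 405B parameter count and the 969 tokens/second figure come from the benchmark quoted above.

```python
def max_tokens_per_s(bandwidth_bytes_per_s: float, params: float,
                     bytes_per_param: float = 2.0) -> float:
    """Upper bound on single-stream decode speed if weight reads dominate."""
    return bandwidth_bytes_per_s / (params * bytes_per_param)


def required_bandwidth(tokens_per_s: float, params: float,
                       bytes_per_param: float = 2.0) -> float:
    """Weight-read bandwidth (bytes/s) implied by a target decode speed."""
    return tokens_per_s * params * bytes_per_param


PARAMS_405B = 405e9  # Llama 3.1 405B parameter count

# Illustrative memory system delivering 1 TB/s (hypothetical, not a product spec).
ceiling = max_tokens_per_s(1e12, PARAMS_405B)
print(f"ceiling at 1 TB/s: {ceiling:.2f} tokens/s")  # ceiling at 1 TB/s: 1.23 tokens/s

# Bandwidth this simplified model would imply for 969 tokens/s.
needed = required_bandwidth(969, PARAMS_405B)
print(f"implied bandwidth: {needed / 1e12:.0f} TB/s")  # implied bandwidth: 785 TB/s
```

The numbers are crude, but they show why the location of the weights matters so much: in this model, decode speed scales directly with how fast weights can be streamed to the compute units.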

What changes inside Bedrock is access. Cerebras already powers fast inference for OpenAI, Cognition, Mistral, and Meta on workloads where token throughput is the bottleneck, particularly agentic coding, where a single user query can trigger 15× the token volume of a normal chat exchange because the model is autonomously writing, reviewing, and revising code in a loop. Today you have to call Cerebras directly to get those speeds. Once the service launches in H2 2026, you call Bedrock.

- ✓ Date of announcement: March 13, 2026. Joint press release from AWS and Cerebras Systems.

- ✓ Service surface: Amazon Bedrock + AWS Marketplace. Models: open-source LLMs + Amazon Nova.

- ✓ Architecture: disaggregated inference. AWS Trainium (prefill) + Cerebras CS-3/WSE (decode), connected via Elastic Fabric Adapter on the AWS Nitro System.

- ✓ Performance claim: 'order of magnitude faster' than current Bedrock inference (David Brown, AWS); up to 3,000 output tokens/sec; 25× faster than leading GPU at decode (Cerebras benchmarks).

- ✓ Capacity claim: 5× more high-speed token capacity in the same hardware footprint vs all-GPU.

- ✓ Existing Cerebras customers: OpenAI, Cognition, Mistral, Meta, primarily for agentic coding workloads where token throughput is the bottleneck.

- ✓ Existing Trainium customers: Anthropic (primary AWS training partner); OpenAI (committed to 2 gigawatts of Trainium capacity).

- ✓ Launch window: H2 2026 (per AWS), 'in the next couple of months' (per March 13 announcement).

- ? Pricing per output token. Neither AWS nor Cerebras has disclosed how the disaggregated workload will be priced relative to all-Trainium or third-party GPU inference inside Bedrock.

- ? Specific Bedrock regions where the service will launch. AWS has said only 'global data center footprint' over time.

- ? Which open-source LLMs will be available at GA. Llama 3.1 405B and Llama 4 Scout are confirmed as Cerebras-supported but not as available on Bedrock on day one.

- ? How the latency arbitrage interacts with Bedrock's existing per-model SLAs and quotas.

- ? Whether Bedrock customers will be able to opt in to the Cerebras path explicitly, or whether the disaggregation is invisible (AWS routes the workload at request time).

- ? Whether other custom-silicon inference vendors (Groq, SambaNova, Tenstorrent) get equivalent Bedrock integration, or whether Cerebras has an exclusivity window.