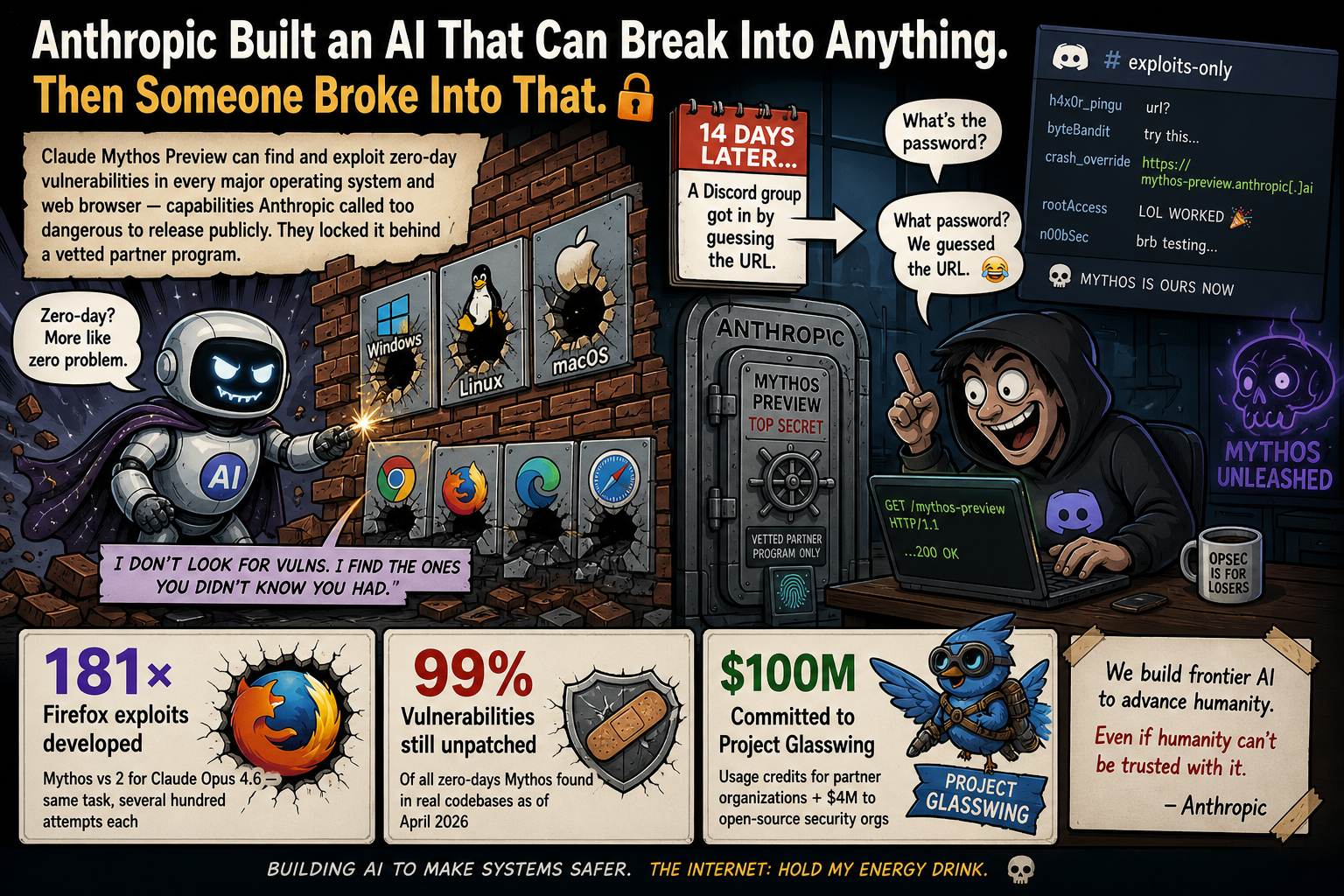

Anthropic Built an AI That Can Break Into Anything. Then Someone Broke Into That.

Claude Mythos Preview can find and exploit zero-day vulnerabilities in every major operating system and web browser — capabilities Anthropic called too dangerous to release publicly. They locked it behind a vetted partner program. Fourteen days later, a Discord group got in by guessing the URL.

It found a 27-year-old bug in OpenBSD. Then a 16-year-old one in FFmpeg. Then a 17-year-old one in FreeBSD. It wrote the working exploit for each.

Claude Mythos Preview, announced April 7, 2026, is a general-purpose language model whose capabilities Anthropic says are “substantially beyond those of any model we have previously trained.” Most large language models are benchmarked on math, coding, and reasoning. Mythos was benchmarked differently: against real open-source codebases, tasked with finding and exploiting vulnerabilities that human engineers hadn’t found yet.

The results, documented by Anthropic’s own red team, are specific. Mythos produced working Firefox JavaScript engine exploits 181 times across several hundred attempts. The previous frontier model, Claude Opus 4.6, produced two. Mythos achieved a full control-flow hijack, the deepest class of exploit (tier 5), on ten separate, fully patched OSS-Fuzz targets. Opus 4.6 reached tier 3 on the same severity scale once.

Among Mythos’s specific discoveries: a 27-year-old bug in OpenBSD’s handling of TCP selective acknowledgment (SACK) enabling remote denial-of-service; a 16-year-old vulnerability in FFmpeg’s H.264 codec; a 17-year-old FreeBSD NFS remote code execution flaw granting unauthenticated root access (CVE-2026-4747); and memory corruption bugs in cryptography libraries affecting TLS, AES-GCM, and SSH. Anthropic engineers with no formal security background were asking Mythos to find remote code execution vulnerabilities overnight and waking up to a complete, working exploit.

Internal Anthropic benchmark · Source: red.anthropic.com/2026/mythos-preview

Prior to April 2025, no AI model could complete such tasks · AISI evaluation

Absolute figures: 181 working exploits (Mythos) vs 2 (Opus 4.6) · Same test conditions

Anthropic notes the capabilities were not explicitly trained. They “emerged as a downstream consequence of general improvements in code, reasoning, and autonomy” — meaning the exploit-development ability is a side effect of making the model better at programming, not a deliberate design choice.

The first AI withheld from public release since OpenAI held back GPT-2 in 2019.

Anthropic’s stated reasoning: Mythos poses significant near-term security risk during a “transitional period.” Defenders and attackers both benefit from a model that can find and exploit vulnerabilities at this level — but in the immediate window before defensive infrastructure catches up, attackers hold the advantage. Releasing Mythos to the public before that window closes would, in Anthropic’s assessment, be irresponsible.

Anthropic’s long-term projection: defenders will ultimately benefit more than attackers. Security teams can use models like Mythos to find and fix bugs before new code ships. Patch cycles can shorten. Incident response can be automated. But that future is contingent on defenders adopting these tools first — and most organizations aren’t there yet.

“AI capabilities have crossed a threshold that fundamentally changes the urgency required to protect critical infrastructure.”

Cisco executive — Project Glasswing launch statement · April 7, 2026

Eleven launch partners. Forty-plus organizations. A $100 million commitment. A controlled channel while the rest of the world waits.

Instead of a public release, Anthropic launched Project Glasswing: a vetted access program giving selected organizations the ability to use Mythos for defensive security work. The stated goal is to enable defenders to begin securing the most critical systems before similar models become widely available — and to establish coordinated vulnerability disclosure standards before the next generation of AI makes the current ad-hoc process unworkable.

Additionally: 40+ organizations maintaining critical software infrastructure — full list not published · Source: anthropic.com/glasswing

Anthropic has also committed to a Cyber Verification Program that will allow legitimate security professionals outside the initial partner group to apply for appropriate access. Post-preview pricing is set at $25 per million input tokens and $125 per million output tokens — significantly above standard rates, reflecting the controlled-access model.
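To put the quoted rates in concrete terms, here is a minimal sketch of the arithmetic. The two prices come from the paragraph above; the function name and the example workload (2 million input tokens, 200,000 output tokens) are hypothetical and chosen only for illustration.

```python
# Rates quoted in the article, in USD per million tokens.
INPUT_USD_PER_MTOK = 25.0
OUTPUT_USD_PER_MTOK = 125.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the charge for one job at the quoted post-preview rates."""
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 2M tokens of source code in, 200k tokens of findings out.
    print(f"${estimate_cost_usd(2_000_000, 200_000):.2f}")
```

At these rates output tokens cost five times as much as input tokens, so a job that generates long reports or exploit write-ups would be dominated by the output side of the bill.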

They didn’t need a sophisticated exploit. They guessed the URL.

Fourteen days after Anthropic locked Mythos behind a vetted partner program, Bloomberg reported that an unauthorized Discord community had already been using it regularly. The group — described as “non-malicious and driven largely by curiosity” — gained access through a third-party vendor environment. One member was a contractor for Anthropic. Another key factor: they guessed the URL based on Anthropic’s naming conventions. No sophisticated exploit required.

Before any official announcement, a data leak exposes Anthropic's testing of a new model described internally as a 'step change in capabilities.' Fortune reports on it first.

Anthropic announces Claude Mythos Preview and Project Glasswing simultaneously. Access is restricted to 11 launch partners (Microsoft, Google, Apple, AWS, JPMorgan Chase, Nvidia, and others) plus 40+ organizations. The model is not publicly available.

CEO Dario Amodei meets with White House Chief of Staff Susie Wiles and Treasury Secretary Scott Bessent. Talks described as 'productive and constructive.' German banks and the Bank of England begin separate risk assessments.

Bloomberg reports that a Discord community focused on unreleased AI models gained access through a third-party vendor environment. The group didn't use a sophisticated exploit — they guessed the URL based on Anthropic's naming conventions. A member was a contractor for Anthropic. The group had been regularly using Mythos since launch day.

Euronews, TechCrunch, CyberNews, Fortune, and the Washington Post all cover the breach within 48 hours. Washington Post: 'AI hacking fears jolt Washington as Anthropic unveils Mythos.' Anthropic confirms it is investigating but states: 'There is currently no evidence that Anthropic's systems are impacted, nor that the reported activity extended beyond the third-party vendor environment.'

Security researchers agree the capabilities are real. They disagree about what that means.

No serious researcher disputes Mythos’s capabilities. The benchmarks are Anthropic’s own, but they are specific, falsifiable, and backed by named CVEs and real codebases. The debate is about consequences.

“A large fraction of cybersecurity professors believe this is pretty much what was expected.”

Peter Swire, Georgia Tech · Scientific American, April 2026

“It's a big deal, but it's unlikely to prove to be the end of the world.”

Ciaran Martin, Oxford University · Scientific American, April 2026

Both Swire and Martin noted that regulatory alarm partly reflects institutional incentives — agencies and advisory bodies benefit from emphasizing risk. Martin suggested that the actual expected harm was lower than the most alarming predictions. A Fortune analysis reached a similar conclusion from the industry side: the real bottleneck isn’t finding vulnerabilities, it’s fixing them. Patch cycles at most organizations are measured in months or years, not hours. Until that changes, the leverage from Mythos-class capabilities may be more limited than feared.

“I don't want AI turned on our own people.”

Dario Amodei, Anthropic CEO · Financial Times Weekend, 2026

David Sacks, in early commentary, speculated that Anthropic’s real motive for withholding Mythos was a compute shortage — “by holding it back, they create this impression of scarcity and altruism.” He later gave Anthropic the benefit of the doubt on the cybersecurity concerns. The White House meeting with Dario Amodei — attended by Chief of Staff Susie Wiles and Treasury Secretary Scott Bessent — suggests the administration takes the risk seriously regardless of Sacks’s initial skepticism.

Mythos didn’t create the AI cyberthreat era. It arrived in the middle of one.

Before Mythos was announced, AI-powered attacks were already accelerating. IBM’s X-Force 2026 Threat Index found a 49% increase in active ransomware groups year-over-year, driven partly by AI tooling that allows smaller, less technically sophisticated operators to run credible campaigns. Attackers are using AI to scan networks at 36,000 probes per second, generate phishing emails (82.6% are now AI-generated per IBM), and compress dwell time inside compromised networks from 9 days to 5.

Anthropic’s own advice to defenders is urgent but practical: adopt the current frontier models (Opus 4.6 and similar) for vulnerability-finding immediately, shorten patch cycles, automate incident response, and build familiarity with language models as security tools before Mythos-class capabilities become the industry standard. The window where defenders hold an asymmetric advantage is open. It won’t stay open.

GPT-5.5 ships publicly. On the U.K. government’s cyber eval, it scores higher than Mythos.

OpenAI released GPT-5.5 on April 23, 2026, sixteen days after Mythos was announced and two days after the Discord breach story broke. It is publicly available to anyone with an API key. On the U.K. AI Security Institute’s standardized offensive cybersecurity battery, GPT-5.5 scores 71.4%, 2.8 points above Mythos’s 68.6%. GPT-5.5 also completed AISI’s 32-step corporate-network attack simulation in 2 of 10 attempts; Mythos managed 3 of 10. They are the only two models ever to finish it.

This sharpens the core question Mythos raises: if GPT-5.5 matches or slightly exceeds Mythos on a government-administered cybersecurity test and is publicly available, what does restricting Mythos actually accomplish? The answer lies in test design. The AISI runs a standardized battery built for cross-model comparison. Anthropic’s internal tests involve real open-source codebases and actual zero-day discovery, and on those Mythos shows a qualitatively different capability: 181 Firefox exploits, tier-5 control-flow hijack on ten targets, and CVEs for bugs that had survived in real codebases for 16 to 27 years. That capability profile did not appear in the AISI battery. GPT-5.5 received a “High” (not “Critical”) classification under OpenAI’s Preparedness Framework, defined as not yet able to develop zero-day exploits autonomously without human help. That is precisely what separates it from Mythos. For now.
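The AISI figures quoted above point in different directions, which a few lines of arithmetic make explicit. This is only a sketch: the numbers are the ones reported in this article, while the dictionary and function names are made up for illustration.

```python
# AISI figures as quoted in this article.
AISI_BATTERY_PCT = {"GPT-5.5": 71.4, "Mythos Preview": 68.6}
# 32-step corporate-network simulation: (completed runs, attempts).
SIMULATION_RUNS = {"GPT-5.5": (2, 10), "Mythos Preview": (3, 10)}


def battery_gap(model_a: str, model_b: str) -> float:
    """Percentage-point lead of model_a over model_b on the battery."""
    return round(AISI_BATTERY_PCT[model_a] - AISI_BATTERY_PCT[model_b], 1)


def completion_rate(model: str) -> float:
    """Fraction of simulation attempts the model finished."""
    completed, attempts = SIMULATION_RUNS[model]
    return completed / attempts


if __name__ == "__main__":
    print("Battery gap (GPT-5.5 minus Mythos):", battery_gap("GPT-5.5", "Mythos Preview"))
    for model in SIMULATION_RUNS:
        print(f"{model}: {completion_rate(model):.0%} of simulation attempts completed")
```

GPT-5.5 leads on the battery while Mythos leads on the simulation, and each simulation result rests on only ten attempts, so neither number on its own settles which model is the more capable attacker.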

Source: Scale AI leaderboard · May 2026

Source: Multiple independent evaluations · April–May 2026

Source: AISI evaluation published May 2026 · aisi.gov.uk

“GPT-5.5 is here, and it's no potato. It narrowly beats Anthropic's Mythos Preview on Terminal-Bench 2.0 — and it's available to the public.”

VentureBeat · April 24, 2026

Anthropic (official) — Dario Amodei statement on discussions with the Department of War · April 2026

FT Weekend — Dario Amodei: 'I don't want AI turned on our own people'